Research

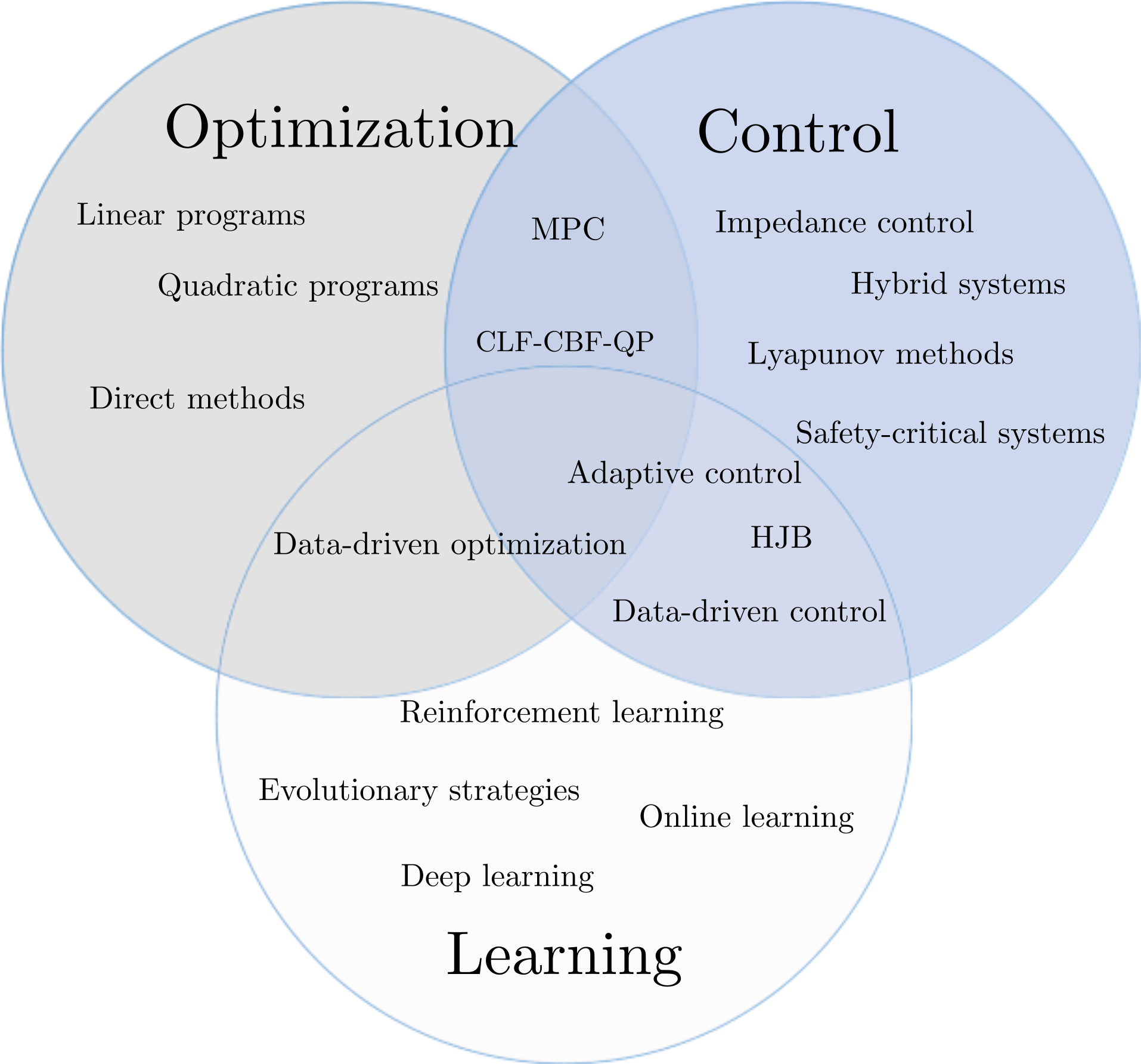

All of my research interests center on robotic systems, whether in control, learning, or optimization. The following slide shows some of the common terms that span my work.

Follow the lab webpage for our latest research updates: LINK

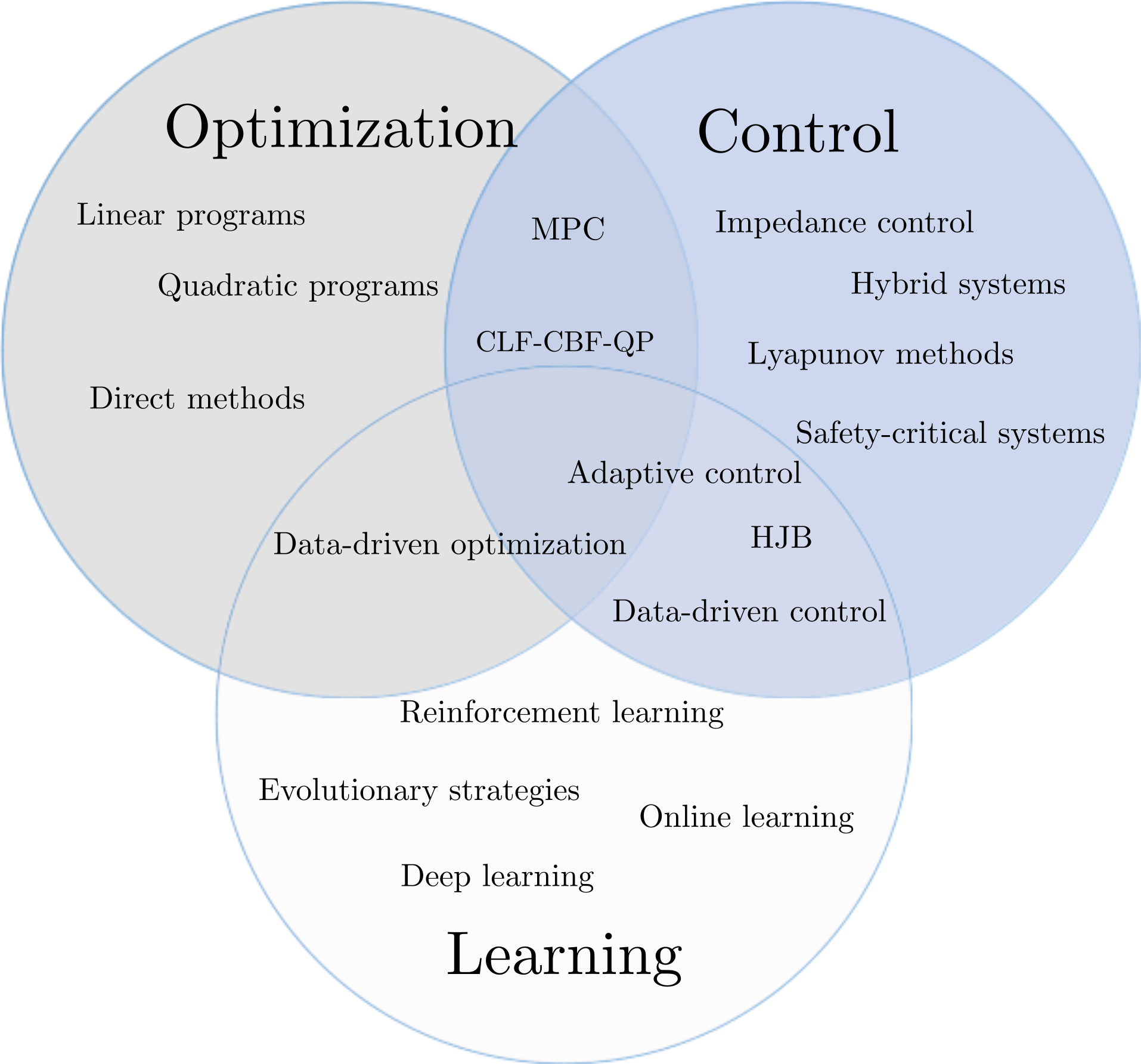
